The rise of large language models (LLMs) has revolutionized how we interact with information. From generating creative text formats to translating languages, these powerful tools are transforming industries across the board. In the realm of communication, LLMs are now being deployed as AI chatbots, engaging users in natural language conversations about specific topics and domains.

But when it comes to crafting the perfect AI chatbot, two key approaches emerge: Fine-Tuning LLMs and Retrieval Augmented Generation (RAG). Understanding the nuances of each method allows developers to choose the optimal approach for their specific needs, unlocking the full potential of AI-powered chatbots.

Fine-Tuning LLMs: A Hands-On Approach

Fine-tuning involves taking a pre-trained LLM and further training it on a specific dataset tailored to the desired domain or task. This dataset may include documents, articles, and other resources relevant to the chatbot's intended purpose.
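As a rough illustration of what such a dataset can look like, the sketch below turns a handful of question and answer pairs into JSON Lines text and holds some back for evaluation. The field names `prompt` and `response`, the example content, and the helper functions are all hypothetical; each fine-tuning toolchain defines its own expected schema, so treat this as a shape to adapt rather than a required format.

```python
import json

# Hypothetical domain examples; a real dataset would hold far more pairs.
examples = [
    {"prompt": "What is the return window?", "response": "Returns are accepted within 30 days."},
    {"prompt": "Do you ship abroad?", "response": "Yes, to selected countries."},
    {"prompt": "How can I reach support?", "response": "By email, Monday to Friday."},
    {"prompt": "Can I change my order?", "response": "Yes, until it has shipped."},
]


def split_train_eval(records, eval_fraction=0.25):
    """Hold out the last portion of the records for evaluation."""
    n_eval = max(1, int(len(records) * eval_fraction))
    return records[:-n_eval], records[-n_eval:]


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


train, evaluation = split_train_eval(examples)
jsonl_text = to_jsonl(train)
print(jsonl_text)
```

Keeping an evaluation split aside makes it possible to check whether the fine-tuned model generalizes to questions it was not trained on.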

For readers familiar with text-to-image models such as Stable Diffusion, fine-tuning is often compared to training LoRAs (low-rank adapters).
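To make the comparison concrete: LoRA (low-rank adaptation) keeps the pre-trained weights frozen and trains two small matrices whose product is added to a weight matrix. The sketch below only counts trainable parameters for a single layer. The layer size (4096 by 4096) and the rank (8) are illustrative assumptions, not values taken from any particular model.

```python
def full_update_params(d_out, d_in):
    """Trainable parameters when the whole d_out x d_in weight matrix is updated."""
    return d_out * d_in


def low_rank_update_params(d_out, d_in, rank):
    """Trainable parameters for a low-rank update B @ A,
    where B is d_out x rank and A is rank x d_in."""
    return d_out * rank + rank * d_in


# Illustrative sizes only (assumed, not from a specific model).
d_out, d_in, rank = 4096, 4096, 8

full = full_update_params(d_out, d_in)            # 16777216
low = low_rank_update_params(d_out, d_in, rank)   # 65536
ratio = low / full                                # 0.00390625, i.e. 1/256

print(f"full: {full}, low-rank: {low}, ratio: {ratio}")
```

Under these assumptions the adapter trains about 0.4% of the parameters that full fine-tuning of the same layer would, which is why adapter-style methods are often used to reduce the cost of fine-tuning.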

Benefits:

- High Accuracy: Fine-tuned LLMs excel at understanding and responding to prompts within their specific domain, offering a high level of accuracy there when the training data is itself accurate and representative.

- Customizable: Developers can fine-tune LLMs to address specific needs and functionalities, tailoring responses to specific questions and scenarios.

- Controllable: The training process allows for precise control over the chatbot's behavior and output, ensuring it aligns with desired goals and objectives.

Drawbacks:

- Resource-Intensive: Fine-tuning requires significant computational resources and expertise, making it a costly and time-consuming process.

- Limited Adaptability: Fine-tuned LLMs may struggle to adapt to new information or situations beyond their initial training data.

- Static Knowledge Base: Changes in the domain or task require additional fine-tuning, hindering the chatbot's ability to keep up with evolving information.

Retrieval Augmented Generation: Leveraging External Knowledge

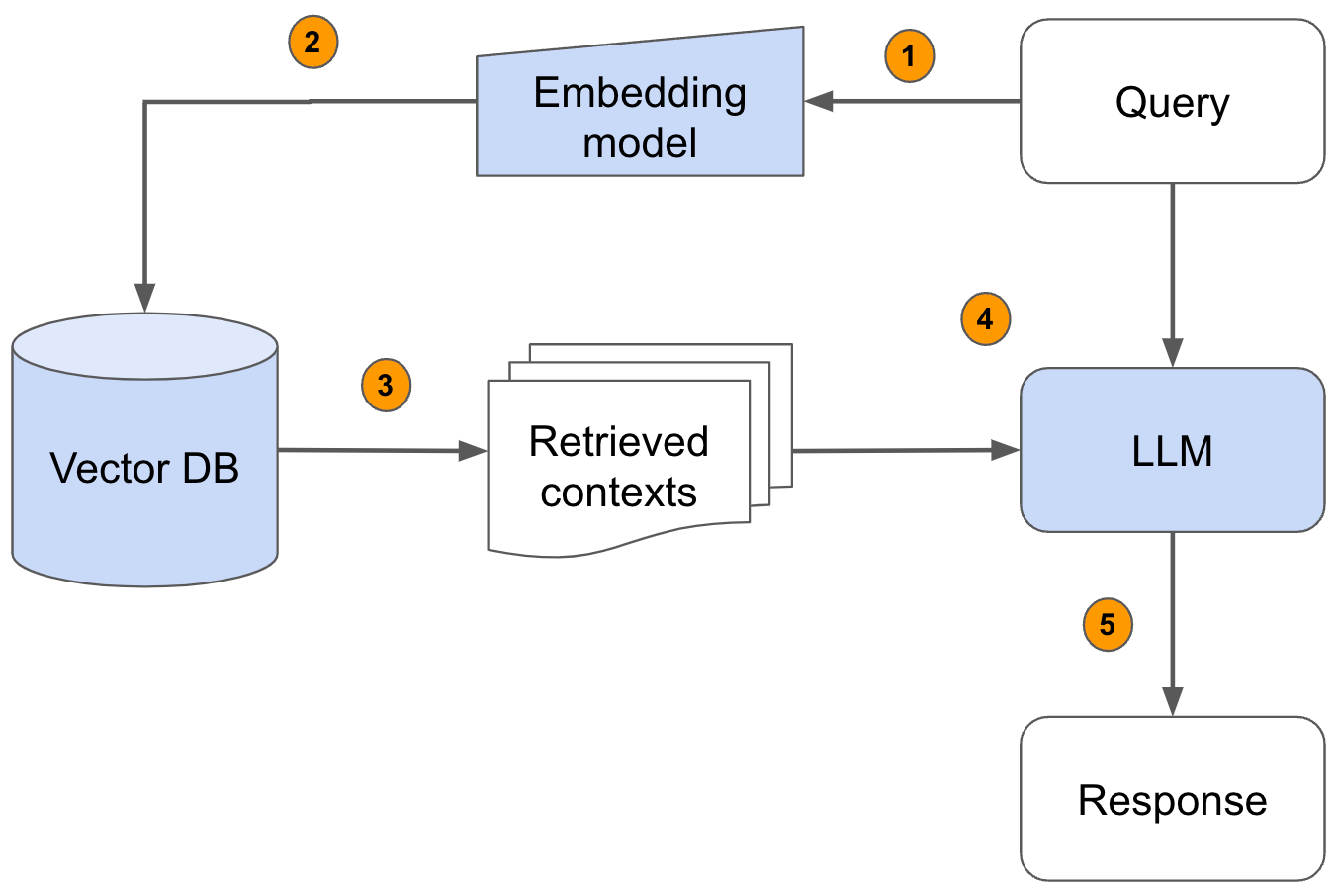

Retrieval Augmented Generation (RAG) takes a different approach. It pairs a pre-trained LLM with a retrieval system that searches external sources for relevant information in real time. The retrieved passages are added to the prompt, so the model can draw on them when it answers. This gives the chatbot access to a vast knowledge base beyond its initial training data.
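The retrieve-then-prompt flow can be sketched in a few lines. This is a minimal illustration, not a production design: it ranks documents by bag-of-words cosine similarity, whereas real systems typically use embedding models and a vector store. The documents, the prompt wording, and the function names are hypothetical, and the final call to an LLM is left out.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; in practice this would be an external source.
DOCUMENTS = [
    "Our store accepts returns within 30 days of purchase.",
    "Standard shipping takes three to five business days.",
    "Support is available by email from Monday to Friday.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Place the retrieved passages in front of the user's question."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


prompt = build_prompt("How long does shipping take?", DOCUMENTS)
print(prompt)
```

Updating the chatbot's knowledge in this setup means editing `DOCUMENTS`; the model itself is not retrained.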

Benefits:

- Dynamic Knowledge: RAG chatbots can access and process up-to-date information, ensuring their responses are relevant and reflect the latest knowledge.

- Adaptable: The ability to leverage external information allows RAG chatbots to adapt to new queries and situations more readily.

- Scalable: RAG's modular design allows for easy integration with various information sources and databases, enabling continuous expansion of the knowledge base.

Drawbacks:

- Lower Accuracy: While RAG offers wider access to information, response quality depends on whether the retrieval step surfaces the right passages, so accuracy may be less consistent than with fine-tuned LLMs.

- Less Control: The reliance on external information can make it challenging to fully control the chatbot's responses and ensure perfect alignment with desired outcomes.

- Complexity: Building and maintaining a RAG system requires technical expertise and careful integration of different components.

Choosing the Right Approach: A Balancing Act

The optimal approach for your AI chatbot depends on its specific purpose and desired functionality.

For domain-specific applications requiring high accuracy and control, fine-tuning LLMs might be the preferred choice. However, if you need a constantly evolving chatbot that adapts to diverse queries and readily integrates new information, RAG might be the better option.

Ultimately, a hybrid approach can offer the best of both worlds. Combining fine-tuning for core domain knowledge with RAG for dynamic content access can create a powerful and versatile AI chatbot capable of handling complex interactions and delivering exceptional user experiences.